Why should children understand AI to use it better?

A pilot program from the MIT Media Lab teaches children how to develop an algorithm so that they can better understand the biases it may produce. Knowing how artificial intelligence affects society can help them realize that the technology around them is not neutral.

A student summarizes how he would describe artificial intelligence (AI) to a friend: “It is like a baby or a human brain, because it has to learn,” he explains in a video, “and it stores […] and uses that information to solve things.”

Most adults would have a hard time coming up with such a convincing definition of a fairly complex topic. At just ten years old, this student was one of 28 participants, ages 9 to 14, in a pilot program held last summer and designed to teach them about AI.

The curriculum, developed by Blakeley Payne, a graduate research assistant at the MIT Media Lab (USA), is part of a broader initiative to integrate these concepts fully into school classrooms. The curriculum, which is open access, includes several interactive activities that help students discover how algorithms are developed and how those processes affect people’s lives.

Today’s children grow up in a world surrounded by AI: algorithms determine what information they see, help them choose the videos they watch, and influence how they learn to communicate. The hope is that by better understanding how algorithms are created and how they affect society, children can become more critical users of this technology. It could even motivate them to help shape its future.

“It is essential that they understand how these technologies work so that they can better use them,” emphasizes Payne. “We want them to feel empowered.”

Why children?

There are several reasons to teach AI to children. First, there is an economic argument: several studies have shown that exposing children to technical concepts stimulates their problem-solving skills and critical thinking. This can prepare them to learn computer skills faster throughout their lives.

Second, there is a social argument. The primary and secondary school years are particularly important in the formation and development of children’s identity. Teaching technology to girls at this age can prepare them to study it later or pursue a career in technology, says Jennifer Jipson, professor of psychology and child development at California State Polytechnic University.

This could help diversify the AI and technology industry in general. Learning to deal with the ethics and social impacts of technology early on can also encourage children to become more conscientious creators and developers, as well as better informed citizens.

Finally, there is the problem of vulnerability. Young people are more malleable and impressionable, so the ethical risks of tracking people’s behavior to design more addictive experiences are more acute for them, according to Rose Luckin, professor of learner-centered design at University College London (UK). Turning children into passive consumers could harm their privacy and long-term development.

“Between 10 and 12 is the average age at which a child receives their first mobile phone or their first social media account,” says Payne. “We want them to really understand that technology represents opinions and goals that do not necessarily coincide with their own, before they become even bigger consumers of technology.”

Algorithms as opinions

Payne’s curriculum includes a series of activities that encourage students to think about the subjectivity of algorithms. They begin by learning about them as if they were recipes, with input information, a set of instructions, and a result. The children are then asked to “build” or write the instructions to come up with an algorithm that will generate the best peanut butter and jelly sandwich.

The children in the summer pilot program quickly grasped the underlying lesson. “A student asked me, ‘Is this supposed to be an opinion or a fact?’” she recalls. Through their own discovery process, the students realized how they had inadvertently incorporated their own preferences into their algorithms.
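The recipe idea can be sketched in a few lines of Python. This is only an illustration and not part of Payne's curriculum: the function name `best_sandwich`, its parameters, and the ingredient values are all hypothetical. It shows how two authors given the same inputs can each write a "best sandwich" algorithm that encodes their own preferences.

```python
def best_sandwich(ingredients, toast_bread=False, remove_crust=True):
    """Return the ordered steps for one author's idea of the 'best' sandwich.

    The default values are opinions, not facts: whoever wrote this function
    decided that untoasted bread without crust is "best".
    """
    steps = []
    if toast_bread:
        steps.append("toast the bread")
    steps.append(f"spread {ingredients['peanut_butter']} peanut butter on slice 1")
    steps.append(f"spread {ingredients['jelly']} jelly on slice 2")
    steps.append("press the slices together")
    if remove_crust:
        steps.append("cut off the crust")
    return steps


# Same input for both students (hypothetical values).
ingredients = {"peanut_butter": "crunchy", "jelly": "grape"}

# Student A accepts the author's defaults; student B disagrees with both.
student_a = best_sandwich(ingredients)
student_b = best_sandwich(ingredients, toast_bread=True, remove_crust=False)

print(student_a)
print(student_b)
```

Both results are valid sandwiches, but they differ, which is the point of the exercise: the word "best" was settled by the person who wrote the instructions.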

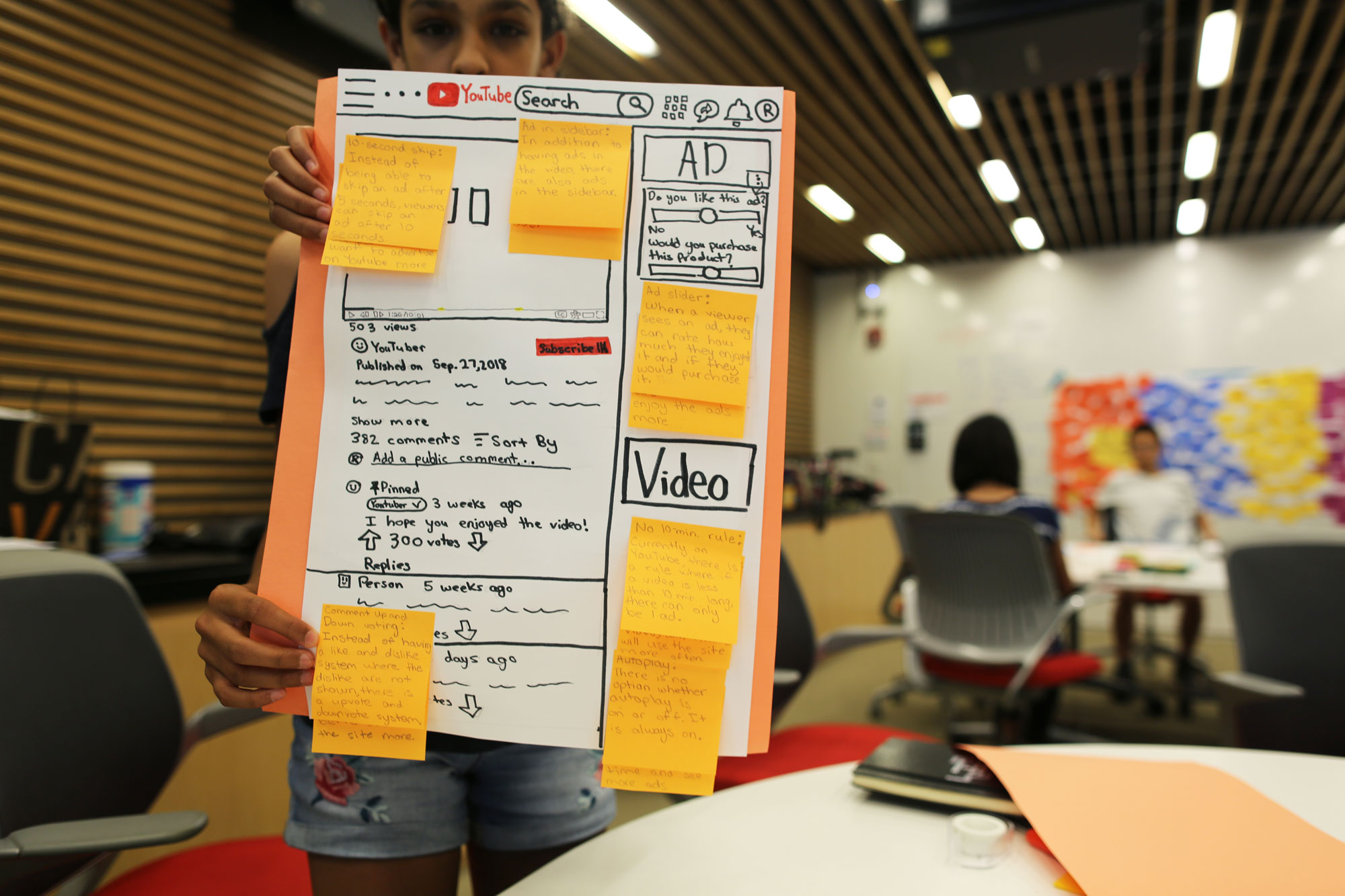

The following activity builds on that concept: students draw what Payne calls an “ethical matrix” to think about how different stakeholders and their values can also affect the design of the best sandwich algorithm. During the program, Payne related the lessons to current events. Together, the students read a Wall Street Journal article about how YouTube executives were planning to create a separate version of their app just for kids, with a modified recommendation algorithm. The students were able to see how investor demands, parental pressure, or children’s preferences could lead the company to redesign its algorithms in completely different ways.

Another set of activities teaches students the concept of algorithmic bias. They use Google’s Teachable Machine, an open source interactive platform for training basic machine learning models, to build a classifier that distinguishes cats from dogs. Without being told, however, they are given a skewed data set. Through a process of experimentation and discussion, they realize that the data set leads the classifier to be more accurate with cats than with dogs. Then they have the opportunity to correct that problem.
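The effect of a skewed data set can be reproduced with a toy example. The sketch below does not use Teachable Machine, which trains on images; it swaps in a one-nearest-neighbor classifier over a single made-up numeric feature, and every number in it is invented for illustration. The names `nearest_label` and `accuracy_by_class` are hypothetical helpers.

```python
def nearest_label(x, training):
    """1-nearest-neighbor: return the label of the closest training example."""
    return min(training, key=lambda example: abs(example[0] - x))[1]


def accuracy_by_class(training, test):
    """Return the fraction of correct predictions for each true label."""
    correct, total = {}, {}
    for x, label in test:
        total[label] = total.get(label, 0) + 1
        if nearest_label(x, training) == label:
            correct[label] = correct.get(label, 0) + 1
    return {label: correct.get(label, 0) / total[label] for label in total}


# Invented feature values: eight cat examples but only two dog examples.
cats = [(v, "cat") for v in [3.0, 3.5, 4.0, 4.5, 5.0, 5.5, 6.0, 6.5]]
few_dogs = [(v, "dog") for v in [8.0, 9.0]]
skewed_train = cats + few_dogs

# The "fix": collect more dog examples so both classes are equally represented.
more_dogs = [(v, "dog") for v in [5.7, 6.3, 6.7, 7.0, 7.5, 8.5]]
rebalanced_train = skewed_train + more_dogs

# A balanced test set with four cats and four dogs.
test = [(v, "cat") for v in [3.2, 4.2, 5.2, 6.1]] + \
       [(v, "dog") for v in [5.8, 6.8, 7.6, 8.8]]

skewed_result = accuracy_by_class(skewed_train, test)
rebalanced_result = accuracy_by_class(rebalanced_train, test)

print(skewed_result)      # {'cat': 1.0, 'dog': 0.5}
print(rebalanced_result)  # {'cat': 1.0, 'dog': 1.0}
```

With the skewed training data, dogs that resemble cats have no nearby dog example and are mislabeled. Adding more dog examples removes the gap in this toy case; real image classifiers are far less tidy, but the mechanism the students discover is the same.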

Once again, Payne connected that exercise with a real-world example by showing students footage of MIT Media Lab researcher Joy Buolamwini speaking to Congress about facial recognition biases. “They were able to see how the kind of thought process they went through could change the way these systems are created in the world,” Payne explains.

Towards the education of the future

Payne plans to continue refining the program, taking into account feedback from participants, and is exploring several avenues to expand its reach. Her goal is to introduce some version of it in public education.

Beyond that, she hopes it will serve as an example of how to educate children about technology, society and ethics. Both Luckin and Jipson agree that this provides a promising foundation for how education could evolve to meet the demands of an increasingly technology-driven world.

“AI as we see it in society right now is not a great equalizer,” concludes Payne. “Education is, or at least, we hope it is. So this is a fundamental step to move towards a more just and equitable society.”

Source: https://www.technologyreview.es/s/11469/por-que-los-ninos-deben-comprender-la-ia-para-usarla-mejor
